We Used Claude Agent to Train an Open LLM!

At TestFlow, we're not just building AI-powered hardware validation. We're literally using AI agents for everything — marketing, product development, sales, customer success, and yes, even fine-tuning the models themselves. Here's how we did it, and how you can too.

We gave AI agents the ability to run every function at TestFlow. Not just write code or generate content — but to actually orchestrate marketing campaigns, qualify sales leads, build product features, manage customer onboarding, and fine-tune the very language models that power our platform. This post shows you how it works and why we believe this is the future of every company.

AI coding agents like Claude Code can use "skills" — packaged instructions, scripts, and domain knowledge — to accomplish specialized tasks. But we took this concept much further. We built skills for every department. Marketing agents that generate and schedule campaigns. Sales agents that research prospects and draft personalized outreach. Product agents that write specs, build features, and ship code. And yes — training agents that fine-tune open-source LLMs on cloud GPUs.

💡 The TestFlow Agent Philosophy

Every repeatable process in the company gets an agent. Every agent gets a skill. Every skill gets better over time. The result? A team of 10 that operates like a team of 100.

With this approach, you can describe a training run in plain English, and the agent will:

- Validate your dataset format

- Select appropriate hardware (t4-small for a 0.6B model)

- Generate and configure the training script with monitoring

- Submit the job to cloud GPUs

- Report the job ID and estimated cost

- Check on progress when you ask

- Help you debug if something goes wrong

The model trains on cloud GPUs while you do other things. When it's done, your fine-tuned model appears on the Hub, ready to deploy into TestFlow's validation pipeline. This isn't a toy demo. The skill supports the same training methods used in production: supervised fine-tuning, direct preference optimization, and reinforcement learning with verifiable rewards.

The TestFlow Agent Stack: Every Department, Automated

Here's how we've deployed agents across every function at TestFlow. Each agent is powered by skills — structured knowledge packs that teach the agent exactly what it needs to know for that domain.

Marketing Agent

Generates blog posts, social media campaigns, email sequences, and SEO content. It knows our brand voice, target audience (semiconductor engineers), and competitive positioning.

Product Agent

Writes feature specs, generates code, builds UI components, creates test cases, and ships to production. It understands our entire codebase, design system, and product roadmap.

Sales Agent

Researches prospects, drafts personalized outreach, qualifies inbound leads, prepares demo scripts, and follows up automatically. It knows our ICP, pricing, and competitive landscape.

Model Fine-Tuning Agent

Fine-tunes open-source LLMs for our specific use cases — semiconductor test generation, validation analysis, and hardware diagnostics. Handles the full lifecycle from data prep to deployment.

Setup and Install

Before starting with model fine-tuning, you'll need:

- A Hugging Face Pro or Team / Enterprise plan (Jobs require a paid plan)

- A write-access token from huggingface.co/settings/tokens

- A coding agent like Claude Code, OpenAI Codex, or Google's Gemini CLI

Hugging Face skills are compatible with Claude Code, Codex, and Gemini CLI, with integrations on the way for Cursor, Windsurf, and Continue.

Claude Code

1. Register the repository as a marketplace plugin:

2. To install a skill, run:

Codex

Codex identifies skills via the AGENTS.md file. Verify instructions are loaded with:

Gemini CLI

Install locally:

Or use the GitHub URL:

Connect to Hugging Face

Authenticate with your Hugging Face account using a write-access token so the job can create a model repo.

For Claude Code, configure the MCP Server:

Your First Training Run

Let's walk through a complete example. We'll fine-tune a small model to see the full workflow, then explore more advanced capabilities.

Instruct the Coding Agent to Fine-Tune

Start with a simple and clear instruction to fine-tune a specific model, such as "Fine-tune Qwen/Qwen3-0.6B on the open-r1/codeforces-cots dataset."

The coding agent analyzes your request and prepares a training configuration. For a 0.6B model on a demo dataset, it selects t4-small, which has enough GPU memory for this model size and is the cheapest option available. This works for vision language models too: you can simply ask it to "Fine-tune Qwen/Qwen3-VL-2B-Instruct on llava-instruct-mix."

Review Before Submitting

Before the agent submits anything, you'll see the configuration:

I'll fine-tune Qwen/Qwen3-0.6B on open-r1/codeforces-cots using SFT.

Configuration:

- Hardware: t4-small (~$0.75/hour)

- Estimated time: ~20 minutes

- Estimated cost: ~$0.30

- Output: username/qwen-codeforces-cots-sft

The model will be pushed to Hub automatically. Should I submit?

This is your chance to adjust anything: change the output repo name, pick different hardware, or ask the agent to modify training parameters. Once you approve, the agent submits the job. For example, you can ask: "Do a quick test run on 100 examples."
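
The cost line in that summary is simple arithmetic: hourly hardware rate times expected run time. A minimal sketch, assuming the ~$0.75/hour t4-small rate and ~20-minute duration quoted above; `estimate_cost` is a hypothetical helper for illustration, not part of the skill:

```python
def estimate_cost(hourly_rate_usd: float, minutes: float) -> float:
    """Return the estimated job cost in USD for an hourly rate and a duration in minutes."""
    return hourly_rate_usd * minutes / 60.0


# t4-small at ~$0.75/hour for a ~20-minute run (figures from the summary above)
cost = estimate_cost(0.75, 20)
print(f"Estimated cost: ~${cost:.2f}")  # Estimated cost: ~$0.25
```

That lands in the same ballpark as the agent's ~$0.30 estimate, which is why small demo runs are cheap enough to repeat.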

Track Progress

After submission, you get job details:

✅ Job submitted successfully!

Job ID: abc123xyz

Monitor: https://huggingface.co/jobs/username/abc123xyz

Expected time: ~20 minutes

Estimated cost: ~$0.30

View real-time metrics at: https://huggingface.co/spaces/username/trackio

The skill includes Trackio integration, so you can watch the training loss decrease in real time. Jobs run asynchronously: close your terminal and come back later. When you want an update, just ask the agent how the job is doing.

Use Your Model

When training completes, your model is on the Hub:

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

model = AutoModelForCausalLM.from_pretrained("username/qwen-codeforces-cots-sft")
tokenizer = AutoTokenizer.from_pretrained("username/qwen-codeforces-cots-sft")
```

That's the full loop. You described what you wanted in plain English, and the agent handled GPU selection, script generation, job submission, authentication, and persistence. The whole thing cost about thirty cents.

Training Methods

The skill supports three training approaches. Understanding when to use each one helps you get better results.

Supervised Fine-Tuning (SFT)

SFT is where most projects start. You provide demonstration data — examples of inputs and desired outputs — and training adjusts the model to match those patterns. Use SFT when you have high-quality examples of the behavior you want: customer support conversations, code generation pairs, domain-specific Q&A, or semiconductor test procedures.
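
Demonstration data for SFT is commonly stored as chat-style conversations in a 'messages' column, the same conversation format the agent looks for during dataset validation. A minimal sketch of turning one input/output pair into that shape; `to_messages` is a hypothetical helper and the example pair is made up for illustration:

```python
from typing import Dict, List


def to_messages(prompt: str, response: str) -> Dict[str, List[Dict[str, str]]]:
    """Wrap one input/output demonstration pair as a chat-style SFT record."""
    return {
        "messages": [
            {"role": "user", "content": prompt},
            {"role": "assistant", "content": response},
        ]
    }


# Hypothetical demonstration pair for a hardware-test use case
record = to_messages(
    "Write a test step that checks the 3.3V rail is within 5% of nominal.",
    "Measure the rail voltage and pass if it is between 3.135 V and 3.465 V.",
)
print(record["messages"][1]["role"])  # assistant
```

Training then teaches the model to produce the assistant turn given the user turn.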

For models larger than 3B parameters, the agent automatically uses LoRA (Low-Rank Adaptation) to reduce memory requirements.
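
LoRA saves memory by freezing each weight matrix W (d × k) and training only two small factors, B (d × r) and A (r × k), so a layer's trainable parameters drop from d·k to r·(d + k). A back-of-the-envelope sketch, assuming a 4096 × 4096 projection and rank 16 (illustrative values, not the agent's defaults):

```python
def full_params(d: int, k: int) -> int:
    """Trainable parameters when fine-tuning a d x k weight matrix directly."""
    return d * k


def lora_params(d: int, k: int, r: int) -> int:
    """Trainable parameters for LoRA factors B (d x r) and A (r x k)."""
    return r * (d + k)


d, k, r = 4096, 4096, 16  # illustrative layer shape and LoRA rank
full = full_params(d, k)
lora = lora_params(d, k, r)
print(full, lora, f"{100 * lora / full:.2f}%")  # 16777216 131072 0.78%
```

Fewer trainable parameters also means far fewer gradients and optimizer states to keep in GPU memory, which is what lets larger models fit on the same hardware.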

Direct Preference Optimization (DPO)

DPO trains on preference pairs — responses where one is "chosen" and another is "rejected." This aligns model outputs with human preferences, typically after an initial SFT stage. Use DPO when you have preference annotations from human labelers or automated comparisons.

DPO is sensitive to dataset format: it requires columns named exactly 'chosen' and 'rejected'. The agent validates this before submitting the job.
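
Under the hood, DPO compares how much the model being trained (the policy) prefers the chosen response over the rejected one, relative to a frozen reference model, usually the SFT checkpoint. A minimal per-pair sketch of the standard DPO loss, assuming you already have summed log-probabilities for each response; the function names and the beta = 0.1 default are illustrative, not the skill's settings:

```python
import math


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def dpo_loss(policy_chosen: float, policy_rejected: float,
             ref_chosen: float, ref_rejected: float, beta: float = 0.1) -> float:
    """Standard DPO loss for one preference pair from summed sequence log-probs."""
    # How far the policy has moved away from the reference on each response
    chosen_shift = policy_chosen - ref_chosen
    rejected_shift = policy_rejected - ref_rejected
    z = beta * (chosen_shift - rejected_shift)
    # -log(sigmoid(z)) == softplus(-z)
    return softplus(-z)


# Policy identical to the reference: loss is log(2)
print(round(dpo_loss(-12.0, -13.0, -12.0, -13.0), 4))  # 0.6931
# Policy has shifted probability toward the chosen response: loss drops below log(2)
print(dpo_loss(-10.0, -15.0, -12.0, -13.0) < math.log(2))  # True
```

Minimizing this loss pushes probability toward chosen responses and away from rejected ones, while the reference terms keep the model from drifting too far from its SFT starting point.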

Group Relative Policy Optimization (GRPO)

GRPO is a reinforcement learning method proven effective on verifiable tasks like solving math problems, writing code, or any task with a programmatic success criterion — including hardware test validation.

The model generates responses, receives rewards based on correctness, and learns from the outcomes. It is more complex than SFT or DPO, but the configuration is similar.
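
The "group relative" part of GRPO is the key idea: for each prompt the model samples several responses, scores each with a programmatic reward, and judges every response against the rest of its group instead of against a learned value model. A minimal sketch of that advantage step, assuming an exact-match reward; the helper names and the arithmetic prompt are hypothetical:

```python
from statistics import mean, pstdev
from typing import List


def exact_match_reward(response: str, expected: str) -> float:
    """Verifiable reward: 1.0 if the answer matches exactly, else 0.0."""
    return 1.0 if response.strip() == expected.strip() else 0.0


def group_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """Normalize rewards within one group of samples drawn for the same prompt."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical: four sampled answers to "What is 17 * 3?"
samples = ["51", "41", "51", "54"]
rewards = [exact_match_reward(s, "51") for s in samples]
advantages = group_advantages(rewards)
print(rewards)  # [1.0, 0.0, 1.0, 0.0]
print([round(a, 3) for a in advantages])  # [1.0, -1.0, 1.0, -1.0]
```

Responses that beat their group's average get a positive advantage and are reinforced; the rest are pushed down. The full method also wraps these advantages in a clipped policy-gradient objective (often with a KL penalty), which this sketch leaves out.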

Hardware and Cost

The agent selects hardware based on your model size, but understanding the tradeoffs helps you make better decisions.

Model Size to GPU Mapping

| Model Size | GPU | Est. Cost | Use Case |
|---|---|---|---|
| <1B params | t4-small | $1-2 | Educational / experimental |
| 1-3B params | t4-medium / a10g-small | $5-15 | Small production models |
| 3-7B params | a10g-large / a100-large + LoRA | $15-40 | Production with LoRA |
| 7B+ params | Not suitable for HF Skills jobs | — | Use dedicated infra |
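
The table reads as a simple lookup. A sketch of that mapping, purely illustrative: `suggest_hardware` is a hypothetical function rather than the agent's actual selection logic, and assigning exactly 3B and 7B to the smaller tier is an assumption:

```python
def suggest_hardware(params_billions: float) -> str:
    """Map a model size (in billions of parameters) to a GPU tier from the table."""
    if params_billions < 1:
        return "t4-small"
    if params_billions <= 3:  # assumption: exactly 3B stays in the non-LoRA tier
        return "t4-medium or a10g-small"
    if params_billions <= 7:  # assumption: exactly 7B still fits with LoRA
        return "a10g-large or a100-large (with LoRA)"
    return "dedicated infrastructure (not suitable for HF Skills jobs)"


print(suggest_hardware(0.6))  # t4-small
print(suggest_hardware(4))  # a10g-large or a100-large (with LoRA)
```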

Demo vs Production

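When testing a workflow, start small: ask for a quick run on a subset of the data (for example, 100 examples) on the cheapest hardware that fits the model.

For production, be explicit about the model, dataset, hardware, and output repository you want, so the agent doesn't have to guess.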

⚡ Pro Tip

Always run a demo before committing to a multi-hour production job. A $0.50 demo that catches a format error saves a $30 failed run.

Dataset Validation

Dataset format is the most common source of training failures. The agent can validate datasets before you spend GPU time.

The agent runs a quick inspection on CPU (fractions of a penny) and reports:

Dataset validation for my-org/conversation-data:

SFT: ✓ READY

Found 'messages' column with conversation format

DPO: ✗ INCOMPATIBLE

Missing 'chosen' and 'rejected' columns
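
The core of this check is comparing a dataset's column names against what each trainer expects. A minimal sketch that reproduces the report above from a list of column names; `validate_columns` and `REQUIRED_COLUMNS` are hypothetical, and the sketch only covers the two cases shown (real trainers accept other layouts too, such as a plain 'text' column for SFT):

```python
from typing import Dict, List

# Columns each method needs in this simplified check
REQUIRED_COLUMNS: Dict[str, List[str]] = {
    "SFT": ["messages"],
    "DPO": ["chosen", "rejected"],
}


def validate_columns(columns: List[str]) -> Dict[str, str]:
    """Report, for each training method, whether the dataset's columns are compatible."""
    report = {}
    for method, required in REQUIRED_COLUMNS.items():
        missing = [c for c in required if c not in columns]
        if missing:
            report[method] = "INCOMPATIBLE (missing " + ", ".join(repr(c) for c in missing) + ")"
        else:
            report[method] = "READY"
    return report


for method, status in validate_columns(["messages"]).items():
    print(f"{method}: {status}")
# SFT: READY
# DPO: INCOMPATIBLE (missing 'chosen', 'rejected')
```

With the `datasets` library, a loaded `Dataset` exposes its column names through the `column_names` attribute, which is the kind of list this function expects.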

Monitoring Training

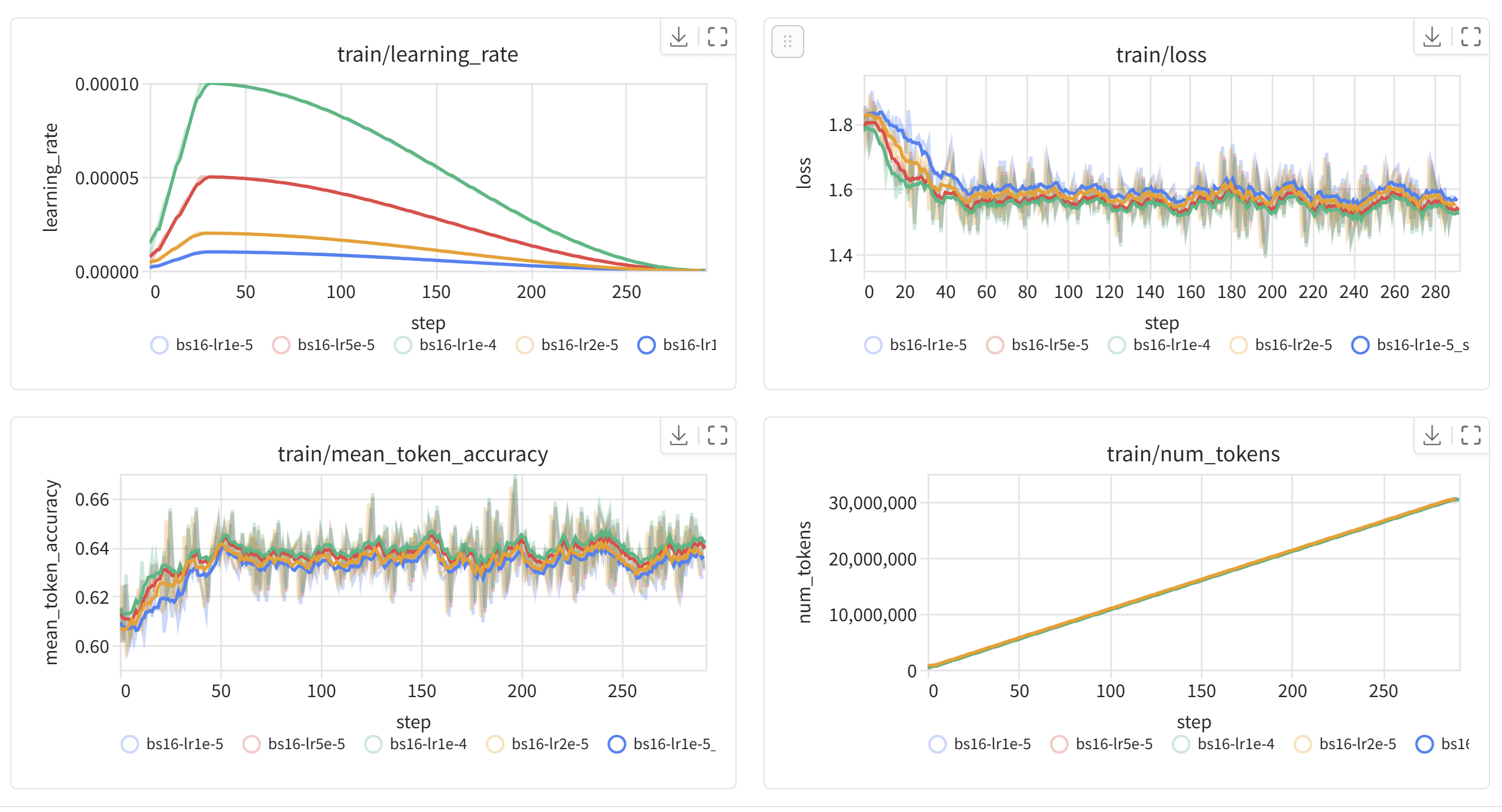

Real-time monitoring helps you catch problems early. The skill configures Trackio by default — after submitting a job, watch metrics at your Trackio dashboard. A healthy run shows steadily decreasing loss.

Ask the agent about status anytime, and it replies with a summary like this:

Job abc123xyz is running (45 minutes elapsed)

Current step: 850/1200

Training loss: 1.23 (↓ from 2.41 at start)

Learning rate: 1.2e-5

Estimated completion: ~20 minutes
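
The completion estimate in a status report like this is essentially a linear extrapolation. A sketch using the numbers above, assuming the average pace so far holds for the remaining steps (`remaining_minutes` is a hypothetical helper, not necessarily how the agent computes it):

```python
def remaining_minutes(elapsed_min: float, step: int, total_steps: int) -> float:
    """Linear ETA: assume the remaining steps run at the average pace so far."""
    minutes_per_step = elapsed_min / step
    return (total_steps - step) * minutes_per_step


eta = remaining_minutes(elapsed_min=45, step=850, total_steps=1200)
loss_drop = (2.41 - 1.23) / 2.41
print(f"ETA: ~{eta:.0f} min, loss down {loss_drop:.0%}")  # ETA: ~19 min, loss down 49%
```

That is consistent with the ~20-minute estimate, and a loss that has roughly halved since the start is the kind of steady decrease a healthy run shows.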

If something goes wrong, the agent helps diagnose it. Out of memory? It suggests reducing the batch size or upgrading the hardware. Dataset error? It identifies the mismatch. Timeout? It recommends a longer job duration or faster training settings.

Converting to GGUF

After training, you might want to run your model locally. The GGUF format works with llama.cpp and tools built on it, such as LM Studio and Ollama.

The agent submits a conversion job that merges LoRA adapters, converts the model to GGUF, applies quantization, and pushes the result to the Hub. You can then run it locally with any of these tools.
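
Quantization is what makes a GGUF file small enough to run comfortably on a laptop: weight storage scales roughly with bits per weight. A back-of-the-envelope sketch for the 0.6B model from the walkthrough, assuming uniform bit widths; real GGUF quantization types also store per-block scale data, so actual files run somewhat larger:

```python
def approx_weights_gb(params_billions: float, bits_per_weight: float) -> float:
    """Rough weight storage in GB: parameters x bits per weight / 8 bits per byte."""
    return params_billions * bits_per_weight / 8


# 0.6B-parameter model from the walkthrough at a few uniform bit widths
for bits in (16, 8, 4):
    print(f"{bits}-bit: ~{approx_weights_gb(0.6, bits):.2f} GB")
# 16-bit: ~1.20 GB
# 8-bit: ~0.60 GB
# 4-bit: ~0.30 GB
```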

Why This Matters: The Agent-First Company

At TestFlow, we're not using agents as a side experiment. We're building the company around them. Every function, every workflow, every repeatable process has an agent assigned to it.

Marketing

Blog posts, social campaigns, email sequences, SEO — all agent-generated, human-reviewed.

Product Development

Feature specs, code generation, UI components, test suites — agents build it, we ship it.

Sales

Prospect research, outreach drafting, lead qualification, follow-ups — all automated.

Model Fine-Tuning

SFT, DPO, GRPO — our agents fine-tune the very models that power TestFlow's validation engine.

The traditional startup playbook says you need to hire 50 people to cover marketing, sales, engineering, and operations. We think differently. We hire the best humans for judgment, creativity, and strategy — and let agents handle the execution at scale.

This is the future. Not "AI tools" tacked on to existing workflows. But AI agents as first-class team members, with their own skills, their own workflows, and their own accountability. And it starts with something as simple as telling your coding agent to fine-tune a model.

What's Next

We've shown that coding agents can handle the full lifecycle of model fine-tuning: validating data, selecting hardware, generating scripts, submitting jobs, monitoring progress, and converting outputs. But more importantly, we've shown that agents can power an entire company.

Some things to try:

- Fine-tune a model on your own dataset

- Build a preference-aligned model with SFT → DPO

- Train a reasoning model with GRPO on math or code

- Convert a model to GGUF and run it with Ollama

- Build your own department-specific agent skills

The skill is open source. You can extend it, customize it for your workflows, or use it as a starting point for other training scenarios.